The problem with not being worried about some new-fangled gizmo that you’re repeatedly being told to worry about is that it makes you look like a doddering, reactionary stick-in-the-mud. King Canute, swaddled in hubris, blind to the inevitable tide of progress. I get it; in today’s fraught attention economy, prognostication and hyperbole are simply more engaging than an impassive Gen-Xer pointing you towards the chillout tent.

Nevertheless, here I am, doing that. TLDR: if you’re running a DMO website and you’re worried about AI, you can stop worrying. About that, at least. Not everything else.

Here are a few reasons why –

- Conversational bots have arrived, but search is still growing and organic traffic is barely down. The modes are complementary, not competitive.

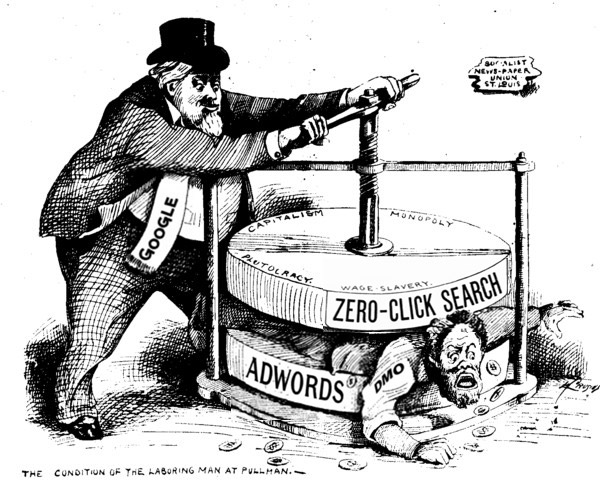

- The significance of the AI Overview is wildly overblown. ’Tis no more than the latest SERP feature in a multi-decade trend towards zero-click search.

- AIO / GEO, to the extent it even exists, is a blip. It will merge seamlessly into SEO, if it hasn’t already.

- If your purpose is to convey information, does the point of transfer matter? Probably not as much as you think.

- Neither Google nor OpenAI wants to kill you, because then they would have no-one to steal from. Nor can they replace your very particular set of skills. Cheer up: they are much more like parasites or racketeers than murderers.

Let’s tackle this piece by piece.

The Sky Isn’t Falling

To begin with, let’s get a sense of proportion about all this. Search volume actually climbed 0.4% in 2025, with Google reportedly exceeding 5 trillion prompts for the first time. Yes, organic traffic from search engines declined, but by a modest 2.5%, nothing like the catastrophic collapse heralded, and indeed sometimes misreported, by the pundits (source: Debunking the myth that organic traffic has dramatically declined).

It’s certainly true that people are using AI assistants as part of their travel planning process. Future Partners have been tracking this through survey data since October 2023, and the percentage of travelers using AI tools to plan has been bouncing around 15% ever since. Even if you suspect the true number is higher – and given the increasing prevalence of AI overviews in Google SERPs, it may be – there is no reason to cast this mode of visitor research as substitutive rather than complementary to existing data sources. It’s a commonplace in our industry that travel planning is multi-touch. According to Expedia, today’s travelers visit, on average, 277 web pages before booking a trip. And that’s on top of research conducted on social media, YouTube etc… It feels oddly clingy to resent the sudden arrival of Claude in this orgastic future. You need to be less uptight.

Richard’s list of things that didn’t kill the web

- AOL

- Adobe Flash

- .com Bubble

- RSS

- Push Notifications

- Social Networks

- Mobile Apps

- Facebook Instant Articles

- Google AMP

- YouTube

- Voice Assistants

- The Metaverse

- AI Assistants [NEW!]

Agentic Web: So Last Decade

It’s usually at this point that people start telling me about the army of AI-agents that will, in response to our prompts, perform a multitude of simultaneous searches across the web and serve up their summarized findings in a content and context-aware interface. They then lower their voice to a conspiratorial whisper, “…this will make bots the primary consumers of web content, rather than human beings…”

Their portentous tones imply the worst, until you realize that what is being described is no more than the common or garden Search Engine Results Page, and that bots have always outnumbered humans on the web.

Yes, the chat window offers a new interface into search, one that supports natural language, much like Ask Jeeves was supposed to in 1997. But, as of the time of writing, AI-initiated search is estimated at no more than 5% of the overall market, pretty much equivalent to Bing, which nobody pays any attention to. When you observe ChatGPT crawling a bunch of websites in real-time in response to your prompt, it gives the appearance of innovation, but what you’re actually looking at is a spinner necessitated by the fact that OpenAI’s crawling and indexing technologies are vastly inferior to Google’s. When you ask Google the same thing, there is no delay, because Google indexed those sites before you asked the question.

The simple fact of the matter is that the Search Engine Results Page has always been an “AI overview”.

It is the nature of search engines to utilize vast amounts of compute to

- parse the web’s contents,

- infer the intent of user prompts, and

- generate the most relevant, usable and monetizable summary that matches the latter to the former.

Over time, those aggregation, inference, summarization and monetization algorithms have become increasingly sophisticated; the incorporation of Large Language Models is just the most recent innovation in a long line of improvements.

To be clear, I’m not saying it isn’t remarkable that we can chat with the web – clearly it is. I’m just saying it doesn’t really change anything.

“That’s all very well,” you might say, “but what about the traffic? If the overview answers the visitor’s questions, they won’t need to visit my website.”

Possibly, but, in this respect, AI overviews are no different from any number of zero-click search features: Featured Snippets, Instant Answers, Top Stories, Local Packs, Hotel Packs, Event Listings, “People Also Ask” blocks, etc.

It is, of course, irritating when Google shoplifts your lovingly curated content and hawks it on the street corner. But for those whose informational needs are shallow enough to be satisfied with a purloined tchotchke, the user experience is a positive one. Those who need more will click through.

The problem of reduced traffic is actually a problem of observability and instrumentation… we use pageviews as a proxy for the utility of our web content, and lack metrics to gauge the value of that content when consumed off-site. Later on, I have some suggestions as to how we might manage this, but we should be clear with ourselves that a drop in website visits does not connote a drop in impact. Zero-click search actually makes our information more accessible, more usable, just less measurable.

At root, AI assistants change discovery surfaces; they don’t abolish the need for authoritative local sources. The important thing is to hold on to what we’re here for – to help people make the most of their precious time off – and to remember what makes us special, which is our local expertise. Travel information decays quickly. If you stop publishing the good stuff, the visitor experience will suffer in real life, as well as in SERPs.

How to Keep Calm and Carry On

1. Develop KPIs for off-site visibility

The reality of zero-click searches – of which AI overviews are just the trendiest example – means that your web content is being consumed off-site. This is a good thing (not as good, one could convincingly argue, as being consumed on-site, but nevertheless significantly better than not being consumed at all), and it would be helpful to have some metrics to indicate how much good is being done.

An obvious place to start is the Total Impressions stat available through Google Search Console, which tells you how many times your content appeared in their SERPs, including AI overviews. Bonus: it’s a really big number. Bing’s Webmaster Tools are now even more helpful, and break out AI SERP performance specifically.
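As a rough sketch of what such a KPI might look like, the Python below totals impressions and clicks and treats the difference as off-site exposure. The `PageStats` shape and the sample figures are hypothetical, not an actual Search Console export format, and an impression only means the result was displayed, not that anyone read it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PageStats:
    """One row of search performance data (hypothetical shape)."""
    page: str
    impressions: int
    clicks: int


def zero_click_summary(rows: list[PageStats]) -> dict[str, float]:
    """Summarise how much search exposure never turned into a site visit."""
    total_impressions = sum(r.impressions for r in rows)
    total_clicks = sum(r.clicks for r in rows)
    zero_click = total_impressions - total_clicks
    # Guard against an empty report so we never divide by zero.
    share = zero_click / total_impressions if total_impressions else 0.0
    return {
        "impressions": total_impressions,
        "clicks": total_clicks,
        "zero_click_impressions": zero_click,
        "zero_click_share": share,
    }


# Illustrative numbers only.
sample = [PageStats("/events", 1000, 40), PageStats("/trails", 500, 10)]
summary = zero_click_summary(sample)
```

Tracked month over month, the zero-click share gives you something to report alongside pageviews, which is the point of the exercise.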

Next, using Google Analytics 4, you can measure how much traffic you’re receiving from ChatGPT…

page referrer contains chatgpt.com

Given a ~0.33% CTR from chatbot citations, you can back into a rough estimate of your visibility on that platform also.
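To make that back-of-envelope sum concrete, here is a minimal Python sketch. It assumes the ~0.33% click-through rate quoted above holds for your site (it may well not), and the function names are illustrative rather than anything GA4 provides. The referrer check matches on hostname, which is slightly stricter than a plain "contains" filter.

```python
from urllib.parse import urlparse


def is_chatgpt_referrer(referrer: str) -> bool:
    """True if the referrer URL's host is chatgpt.com or a subdomain of it."""
    host = urlparse(referrer).hostname or ""
    return host == "chatgpt.com" or host.endswith(".chatgpt.com")


def estimate_citation_views(referred_sessions: int, ctr: float = 0.0033) -> int:
    """Back into approximate citation views from observed referral sessions.

    ctr is the assumed click-through rate from a chatbot citation,
    expressed as a fraction (0.0033 means 0.33%).
    """
    if referred_sessions < 0:
        raise ValueError("referred_sessions cannot be negative")
    if not 0 < ctr <= 1:
        raise ValueError("ctr must be a fraction between 0 and 1")
    return round(referred_sessions / ctr)


# Illustrative: 33 sessions from ChatGPT in a month.
estimated_views = estimate_citation_views(33)
```

With 33 referred sessions, the estimate comes out at roughly 10,000 citation views. Treat it as an order-of-magnitude figure, since the whole calculation hangs on the assumed CTR.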

To take things further, SEO toolsets such as Ahrefs provide metrics on the number of keywords that situate your content in specific SERP features, and the frequency with which your page content is cited in AI overviews generated from a (gigantic) set of standardized prompts.

2. Don’t let go of SEO

I mean, not that you were going to.

As I will expound upon at length in future posts, I’m not sure if any specific tactic outweighs SEO in importance in the albeit discrete realm of digital destination marketing.

In terms of the current discussion, not only does SEO drive traffic from the 95% of searches still conducted through “traditional” (non AI-assisted) means, but ranking well also connotes authority and increases the likelihood of that content being referenced or summarized in AI overviews.

Wait, don’t I mean GEO / AIO? Aren’t we supposed to be rewriting our content for more efficient ingestion and more straightforward quotation by the bots?

Actually, no.

I mean, you could, but the current recommendations for GEO, to the extent they differ from SEO (and by and large they don’t, very much), are simply reflections of current and temporary inadequacies of the (non-Gemini) AI algorithms to parse your content cleanly and often. They will get better, and they will likely get better much more quickly than you can rewrite your content and then rewrite it again when they improve.

Imagine you’ve written Anna Karenina, and you want to sell as many copies as possible, and 5% of your audience follow a 7-year-old influencer. You could butcher your masterpiece to meet the reading level of the influencer whose audience you’re trying to target. Or you could perhaps be patient, not worry too much about that 5% today, and in a few years’ time that influencer will be able to read your novel the way it’s written.

Danny Sullivan, the founder of Search Engine Watch and the ex “Search Liaison” for Google, has been pretty clear about this; optimizing for LLMs is a “grey hat” technique that will work only until the algorithms improve. A better strategy is probably the one you already have – to optimize for the same factors that Google optimizes its algorithm to meet: Experience, Expertise, Authoritativeness, and Trustworthiness.

AIO is a short-term bleeding-edge technique designed to squeeze an extra percentage point of traffic in the kind of commercial situations where that produces a positive ROI. As with all things at the nexus of DMOs and technology, you are not funded to work at the bleeding edge. And in terms of your quality of life, that’s probably a good thing.

3. Consider addressing the real threat: misinformation

In the event you’re not convinced by my suggestion to do nothing – or you are, but cannot convince your management of same – let’s think creatively… What if the thing you ought to be worried about isn’t dwindling website traffic, but misinformation? LLMs confidently disseminating errors that compromise the visitor experience at an industrial scale?

A few plausible examples:

- Hallucinated logistics: “The light rail runs 24/7” / “You can drive to Mount Hood in 45 minutes” / “This museum is free on Tuesdays” — all believable, inaccurate and potentially trip-ruining.

- Outdated reality presented as current: a restaurant that closed six months ago; a venue that changed hours; a trail that’s seasonally inaccessible; a policy that quietly shifted.

- Synthetic consensus: multiple AI-generated articles repeating the same incorrect claim until it looks widely corroborated.

The defense against this sort of accidental informational apocalypse is reassuringly boring:

- Be the canonical source of facts. Maintain a set of pages that are boringly factual and aggressively updated (transit basics, extreme weather alerts, event cancellations, road closures, trail conditions, safety advisories etc).

- Keep logistical data fresh and machine-readable. Include “last updated” dates and times on key info pages, and use structured data where appropriate. Maintain stable URLs.

- Write for bot citation, not the Pulitzer. Short paragraphs, unambiguous statements and specific numbers. Yes, I know I said not to bother pandering to the AIO vogue, but you wouldn’t listen, so here we are.

- Maintain a “known misconceptions” page, if you know of any. “No, you can’t drive to X in winter without chains…” etc.
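For the “fresh and machine-readable” item, one plausible approach is to emit schema.org JSON-LD carrying a `dateModified` timestamp. The sketch below is a minimal illustration, not a complete markup strategy: the helper name, page title and URL are hypothetical, and `WebPage` is only one of several schema.org types that might suit a given page.

```python
import json
from datetime import datetime, timezone


def build_page_jsonld(name: str, url: str, last_updated: datetime) -> str:
    """Return a JSON-LD string describing a page and when it last changed."""
    if last_updated.tzinfo is None:
        # An ambiguous timestamp defeats the purpose of publishing one.
        raise ValueError("last_updated must be timezone-aware")
    data = {
        "@context": "https://schema.org",
        "@type": "WebPage",
        "name": name,
        "url": url,
        "dateModified": last_updated.isoformat(),
    }
    return json.dumps(data, indent=2)


# Hypothetical page; the URL should stay stable across updates.
markup = build_page_jsonld(
    "Trail Conditions",
    "https://www.example.org/trail-conditions",
    datetime(2025, 1, 15, 9, 30, tzinfo=timezone.utc),
)
```

The resulting string would sit inside a `<script type="application/ld+json">` element on the page, alongside the human-readable “last updated” line.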

The goal here isn’t to generate organic traffic. It’s to make your information the easiest, freshest truth for machines to ingest, thus reducing their capacity for mass, casual falsehood.

In Conclusion

So, will AI assistants kill DMO websites?

No, no they won’t.

But they will increase the area over which the content of those sites is distributed. Zero-click search results – of which AI Overviews are just the latest example – change the locus of information transfer to somewhere more convenient and less observable. For newspapers that have to monetize their traffic, this may be an existential threat. For DMOs, whose purpose is to help visitors have a better trip, the point of transfer matters less than the accuracy of the information transferred.

Whatever they may tell you, the next step is not to panic and pivot to AIO; it’s to persevere with workmanlike diligence on the fundamentals. And when you’re next invited to an AI-themed webinar, feel free to RSVP “Maybe,” then go back to fixing your event listings.

You’ll find me in the chillout tent.
